A few weeks ago I started reading reddit/r/statistics regularly. I post links to this blog there and the readers have given me some good feedback. And I have answered a few questions.

One of the most commons question I see is something like, "I have some data. Which test should I use?" When I see this question, I always have two thoughts:

1) I hate the way statistics is taught, because it gives students the impression that hypothesis testing is all about choosing the right test, and if you get it wrong the statistics wonks will yell at you, and

2) There is only one test!

Let me explain. All tests try to answer the same question: "Is the apparent effect real, or is it due to chance?" To answer that question, we formulate two hypotheses: the null hypothesis, H0, is a model of the system if the effect is due to chance; the alternate hypothesis, HA, is a model where the effect is real.

Ideally we should compute the probability of seeing the effect (E) under both hypotheses; that is P(E | H0) and P(E | HA). But formulating HA is not always easy, so in conventional hypothesis testing, we just compute P(E | H0), which is the p-value. If the p-value is small, we conclude that the effect is unlikely to have occurred by chance, which suggests that it is real.

That's hypothesis testing. All of the so-called tests you learn in statistics class are just ways to compute p-values efficiently. When computation was expensive, these shortcuts were important, but now that computation is virtually free, they are not.

And the shortcuts are often based on simplifying assumptions and approximations. If you violate the assumptions, the results can be misleading, which is why statistics classes are filled with dire warnings and students end up paralyzed with fear.

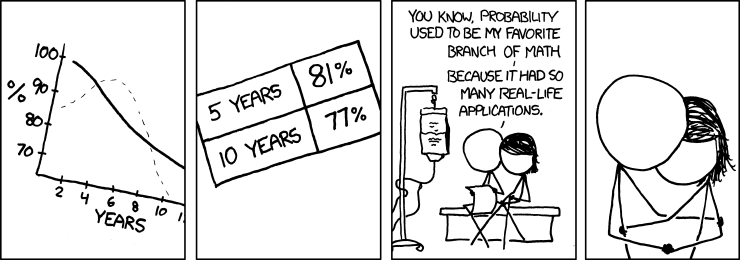

Fortunately, there is a simple alternative: simulation. If you can construct a model of the null hypothesis, you can estimate p-values just by counting. This figure shows the structure of the simulation.

The first step is to get data from the system and compute a test statistic. The result is some measure of the size of the effect, which I call "delta". For example, if you are comparing the mean of two groups, delta is the difference in the means. If you are comparing actual values with expected values, delta is a chi-squared statistic (described below) or some other measure of the distance between the observed and expected values.

The null hypothesis is a model of the system under the assumption that the apparent effect is due to chance. For example, if you observed a difference in means between two groups, the null hypothesis is that the two groups are the same.

The next step is to use the model to generate simulated data that has the sample size as the actual data. Then apply the test statistic to the simulated data.

The last step is the easiest; all you have to do is count how many times the test statistic for the simulated data exceeds delta. That's the p-value.

The last step is the easiest; all you have to do is count how many times the test statistic for the simulated data exceeds delta. That's the p-value.

As an example, let's do the Casino problem from Think Stats:

Suppose you run a casino and you suspect that a customer has replaced a die provided by the casino with a ``crooked die''; that is, one that has been tampered with to make one of the faces more likely to come up than the others. You apprehend the alleged cheater and confiscate the die, but now you have to prove that it is crooked. You roll the die 60 times and get the following results:

Value 1 2 3 4 5 6

Frequency 8 9 19 6 8 10

What is the probability of seeing results like this by chance?

The null hypothesis is that the die is fair. Under that assumption, the expected frequency for each value is 10, so the frequencies 8, 9 and 10 are not surprising, but 6 is a little funny and 19 is suspicious.

To compute a p-value, we have to choose a test statistic that measures how unexpected these results are. The chi-squared statistic is a reasonable choice: for each value we compare the expected frequency, exp, and the observed frequency, obs, and compute the sum of the squared relative differences:

def ChiSquared(expected, observed):

total = 0.0

for x, exp in expected.Items():

obs = observed.Freq(x)

total += (obs - exp)**2 / exp

return total

To compute a p-value, we have to choose a test statistic that measures how unexpected these results are. The chi-squared statistic is a reasonable choice: for each value we compare the expected frequency, exp, and the observed frequency, obs, and compute the sum of the squared relative differences:

def ChiSquared(expected, observed):

total = 0.0

for x, exp in expected.Items():

obs = observed.Freq(x)

total += (obs - exp)**2 / exp

return total

Why relative? Because the variation in the observed values depends on the expected value. Why squared? Well, squaring makes the differences positive, so they don't cancel each other when we add them up. But other than that, there is no special reason to choose the exponent 2. The absolute value would also be a reasonable choice.

For the observed frequencies, the chi-squared statistic is 20.6. By itself, this number doesn't mean anything. We have to compare it to results from the null hypothesis.

Here is the code that generates simulated data:

def SimulateRolls(sides, num_rolls):

"""Generates a Hist of simulated die rolls.

Args:

sides: number of sides on the die

num_rolls: number of times to rolls

Returns:

Hist object

"""

hist = Pmf.Hist()

for i in range(num_rolls):

roll = random.randint(1, sides)

hist.Incr(roll)

return hist

And here is the code that runs the simulations:

count = 0.

num_trials = 1000

num_rolls = 60

threshold = ChiSquared(expected, observed)

for _ in range(num_trials):

simulated = SimulateRolls(sides, num_rolls)

chi2 = ChiSquared(expected, simulated)

if chi2 >= threshold:

count += 1

pvalue = count / num_trials

print 'p-value', pvalue

Out of 1000 simulations, only 34 generated a chi-squared value greater than 20.6, so the estimated p-value is 3.4%. I would characterize that as borderline significant. Based on this result, I think the die is probably crooked, but would I have the guy whacked? No, I would not. Maybe just roughed up a little.

For this problem, I could have computed the sample distribution of the test statistic analytically and computed the p-value quickly and exactly. If I had to run this test many times for large datasets, computational efficiency might be important. But usually it's not.

And accuracy isn't very important either. Remember that the test statistic is arbitrary, and the null hypothesis often involves arbitrary choices, too. There is no point in computing an exact answer to an arbitrary question.

For most problems, we only care about the order of magnitude: if the p-value is smaller that 1/100, the effect is likely to be real; if it is greater than 1/10, probably not. If you think there is a difference between a 4.8% (significant!) and 5.2% (not significant!), you are taking it too seriously.

So the advantages of analysis are mostly irrelevant, but the disadvantages are not:

1) Analysis often dictates the test statistic; simulation lets you choose whatever test statistic is most appropriate. For example, if someone is cheating at craps, they will load the die to increase the frequency of 3 and/or 4, not 1 and/or 6. So in the casino problem the results are suspicious not just because one of the frequencies is high, but also because the frequent value is 3. We could construct a test statistic that captures this domain knowledge (and the resulting p-value would be lower).

2) Analytic methods are inflexible. If you have issues like censored data, non-independence, and long-tailed distributions, you won't find an off-the-shelf test; and unless you are a mathematical statistician, you won't be able to make one. With simulation, these kinds of issues are easy.

3) When people think of analytic methods as black boxes, they often fixate on finding the right test and figuring out how to apply it, instead of thinking carefully about the problem.

In summary, don't worry about finding the "right" test. There's no such thing. Put your effort into choosing a test statistic (one that reflects the effect you are testing) and modeling the null hypothesis. Then simulate it, and count!

Added June 24, 2011: For some additional examples, see this followup post.

For the observed frequencies, the chi-squared statistic is 20.6. By itself, this number doesn't mean anything. We have to compare it to results from the null hypothesis.

Here is the code that generates simulated data:

def SimulateRolls(sides, num_rolls):

"""Generates a Hist of simulated die rolls.

Args:

sides: number of sides on the die

num_rolls: number of times to rolls

Returns:

Hist object

"""

hist = Pmf.Hist()

for i in range(num_rolls):

roll = random.randint(1, sides)

hist.Incr(roll)

return hist

And here is the code that runs the simulations:

count = 0.

num_trials = 1000

num_rolls = 60

threshold = ChiSquared(expected, observed)

for _ in range(num_trials):

simulated = SimulateRolls(sides, num_rolls)

chi2 = ChiSquared(expected, simulated)

if chi2 >= threshold:

count += 1

pvalue = count / num_trials

print 'p-value', pvalue

Out of 1000 simulations, only 34 generated a chi-squared value greater than 20.6, so the estimated p-value is 3.4%. I would characterize that as borderline significant. Based on this result, I think the die is probably crooked, but would I have the guy whacked? No, I would not. Maybe just roughed up a little.

For this problem, I could have computed the sample distribution of the test statistic analytically and computed the p-value quickly and exactly. If I had to run this test many times for large datasets, computational efficiency might be important. But usually it's not.

And accuracy isn't very important either. Remember that the test statistic is arbitrary, and the null hypothesis often involves arbitrary choices, too. There is no point in computing an exact answer to an arbitrary question.

For most problems, we only care about the order of magnitude: if the p-value is smaller that 1/100, the effect is likely to be real; if it is greater than 1/10, probably not. If you think there is a difference between a 4.8% (significant!) and 5.2% (not significant!), you are taking it too seriously.

So the advantages of analysis are mostly irrelevant, but the disadvantages are not:

1) Analysis often dictates the test statistic; simulation lets you choose whatever test statistic is most appropriate. For example, if someone is cheating at craps, they will load the die to increase the frequency of 3 and/or 4, not 1 and/or 6. So in the casino problem the results are suspicious not just because one of the frequencies is high, but also because the frequent value is 3. We could construct a test statistic that captures this domain knowledge (and the resulting p-value would be lower).

2) Analytic methods are inflexible. If you have issues like censored data, non-independence, and long-tailed distributions, you won't find an off-the-shelf test; and unless you are a mathematical statistician, you won't be able to make one. With simulation, these kinds of issues are easy.

3) When people think of analytic methods as black boxes, they often fixate on finding the right test and figuring out how to apply it, instead of thinking carefully about the problem.

In summary, don't worry about finding the "right" test. There's no such thing. Put your effort into choosing a test statistic (one that reflects the effect you are testing) and modeling the null hypothesis. Then simulate it, and count!

Added June 24, 2011: For some additional examples, see this followup post.