So you can imagine my reaction to a click-bait headline like, "Does Engineering Education Breed Terrorists?", which appeared recently in the Chronicle of Higher Education.

The article presents results from a new book, Engineers of Jihad: The Curious Connection Between Violent Extremism and Education, by Diego Gambetta and Steffen Hertog. If you don't want to read the book, it looks like you can get most of the results from this working paper, which is free to download.

I have not read the book, but I will summarize results from the paper.

Engineers are over-represented

Gambetta and Hertog compiled a list of 404 "members of violent Islamist groups". Of those, they found educational information on 284, and found that 196 had some higher education. For 178 of those cases, they were able to determine the subject of study, and of those, the most common fields were engineering (78), Islamic studies (34), medicine (14), and economics/business (12).

So the representation of engineers among this group is 78/178, or 44%. Is that more than we should expect? To answer that, the ideal reference group would be a random sample of males from the same countries, with the same distribution of ages, selecting people with some higher education.
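As a quick check of the arithmetic, here is a minimal sketch that tallies the shares from the counts quoted above. The variable names are only illustrative, and the "other" row is simply whatever remains of the 178 after the four fields listed in the text.

```python
# Counts as reported in the text; 178 is the number of cases
# where the subject of study could be determined.
N_FIELD_KNOWN = 178
fields = {
    "engineering": 78,
    "Islamic studies": 34,
    "medicine": 14,
    "economics/business": 12,
}

# Share of each listed field among the cases with a known field of study.
shares = {name: count / N_FIELD_KNOWN for name, count in fields.items()}

# The text lists only the four most common fields; the rest are lumped together.
other = N_FIELD_KNOWN - sum(fields.values())

for name, count in fields.items():
    print(f"{name:20s} {count:3d}  {shares[name]:.1%}")
print(f"{'other (not listed)':20s} {other:3d}  {other / N_FIELD_KNOWN:.1%}")
```

Engineering comes out at roughly 44% of the cases with a known field, matching the figure above.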

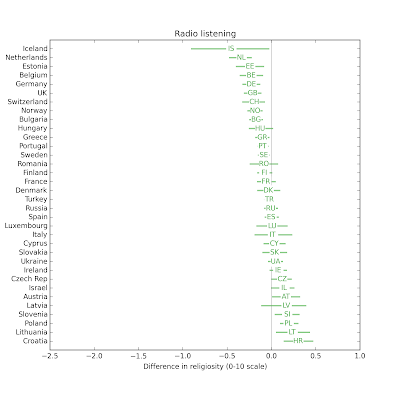

It is not easy to assemble a reference sample like that, but Gambetta and Hertog approximate it with data from several sources. Their Table 4 summarizes the results, broken down by country:

In every country, the fraction of engineers in the sample of violent extremists is higher than the fraction in the reference group (with the possible exceptions of Singapore and Saudi Arabia).

As always in studies like this, there are methodological issues that could be improved, but taking the results at face value, it looks like people who studied engineering are over-represented among violent extremists by a substantial factor -- Gambetta and Hertog put it at "two to four times the size we would expect [by random selection]".

Why engineers?

In the second part of the paper, Gambetta and Hertog turn to the question of causation; as they formulate it, "we will try to explain only why engineers became more radicalized than people with other degrees".

They consider four hypotheses:

1) "Random appearance of engineers as first movers, followed by diffusion through their network", which means that if the first members of these groups were engineers by chance, and if they recruited primarily through their social networks, and if engineers are over-represented in those networks, the observed over-representation might be due to historical accident.

2) "Selection of engineers because of their technical skills"; that is, engineers might be over-represented because extremist groups recruit them specifically.

3) "Engineers' peculiar cognitive traits and dispositions"; which includes both selection and self-selection; that is, extremist groups might recruit people with the traits of engineers, and engineers might be more likely to have traits that make them more likely to join extremist groups. [I repeat "more likely" as a reminder that under this hypothesis, not all engineers have the traits, and not all engineers with the traits join extremist groups.]

4) "The special social difficulties faced by engineers in Islamic countries"; which is the hypothesis that (a) engineering is a prestigious field of study, (b) graduates expect to achieve high status, (c) in many Islamic countries, their expectations are frustrated by lack of economic opportunity, and (d) the resulting "frustrated expectations and relative deprivation" explain why engineering graduates are more likely to become radicalized.

Their conclusion is that 1 and 2 "do not survive close scrutiny" and that the observed over-representation is best explained by the interaction of 3 and 4.

My conclusions

Here is my Bayesian interpretation of these hypotheses and the arguments Gambetta and Hertog present:

1) Initially, I didn't find this hypothesis particularly plausible. The paper presents weak evidence against it, so I find it less plausible now.

2) I find it very plausible that engineers would be recruited by extremist groups, not only for specific technical skills but also for their general ability to analyze systems, debug problems, design solutions, and work on teams to realize their designs. And once recruited, I would expect these skills to make them successful in exactly the ways that would cause them to appear in the selected sample of known extremists. The paper addresses only a narrow version of this hypothesis (specific technical skills), and provides only weak evidence against it, so I still find it highly plausible.

3) Initially I found it plausible that there are character traits that make a person more likely to pursue engineering and more likely to join an extremist group. This hypothesis could explain the observed over-representation even if both of those connections are weak; that is, even if few of the people who have the traits pursue engineering and even fewer join extremist groups. The paper presents some strong evidence for this hypothesis, so I find it quite plausible.

4) My initial reaction to this hypothesis is that the causal chain sounds fragile. And even if true, it is so hard to test that it verges on being unfalsifiable. But Gambetta and Hertog were able to do more work with it than I expected, so I grudgingly upgrade it to "possible".
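The claim under hypothesis 3, that a shared trait can produce over-representation even when both connections are weak, can be illustrated with a small calculation. This is only a sketch: every probability below is made up for illustration and none comes from the paper, the function name is hypothetical, and the model assumes that studying engineering and joining an extremist group are independent once the trait is taken into account.

```python
def engineer_share_among_extremists(p_trait, p_eng_trait, p_eng_other,
                                    p_ext_trait, p_ext_other):
    """Return (P(engineer), P(engineer | extremist)) under a common-cause model.

    Assumes engineering and extremism are independent given the trait.
    """
    # Overall fraction of engineers, by the law of total probability.
    p_eng = p_trait * p_eng_trait + (1 - p_trait) * p_eng_other
    # Overall fraction of extremists.
    p_ext = p_trait * p_ext_trait + (1 - p_trait) * p_ext_other
    # Fraction who are both, using conditional independence given the trait.
    p_both = (p_trait * p_eng_trait * p_ext_trait
              + (1 - p_trait) * p_eng_other * p_ext_other)
    return p_eng, p_both / p_ext


# Hypothetical inputs, NOT estimates from the paper.
p_eng, p_eng_given_ext = engineer_share_among_extremists(
    p_trait=0.10,       # 10% of graduates have the trait
    p_eng_trait=0.30,   # most people with the trait still do not study engineering
    p_eng_other=0.10,
    p_ext_trait=0.001,  # and very few of them join an extremist group
    p_ext_other=0.0001,
)
ratio = p_eng_given_ext / p_eng
print(f"P(engineer) = {p_eng:.3f}")
print(f"P(engineer | extremist) = {p_eng_given_ext:.3f}")
print(f"over-representation factor = {ratio:.2f}")
```

With these invented numbers, engineers are over-represented among extremists by a factor of about 1.7, even though 70% of people with the trait never study engineering and 99.9% never join a group. The factor depends entirely on the inputs, so it says nothing about the size of the real effect.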

In summary, my interpretation of the results differs slightly from the authors': I think (2) and (3) are more plausible than (3) and (4).

But overall I think the paper is solid: it starts with an intriguing observation, makes good use of data to refine the question and to evaluate alternative hypotheses, and does a reasonable job of interpreting the results.

So at this point, you might be wondering "What about engineering education? Does it breed terrorists, like the headline said?"

Good question! But Gambetta and Hertog have nothing to say about it. They did not consider this hypothesis, and they presented no evidence for or against it. In fact, the phrase "engineering education" does not appear in their 90-page paper except in the title of a paper they cite.

Does bad journalism breed click bait?

In this case, the author of the article, Dan Berrett, might not be the guilty party. He does a good job of summarizing Gambetta and Hertog's research. He presents summaries of a related paper on the relationship between education and extremism, and a related book. He points out some limitations of the study design. So far, so good.

The second half of the article, where Berrett reports speculation from researchers farther and farther from the topic, is weaker.

For example, Donna M. Riley at Virginia Tech suggests that traditional [engineering] programs "reinforce positivist patterns of thinking" because "general-education courses [...] are increasingly being narrowed" and "engineers spend almost all their time with the same set of epistemological rules."

Berrett also quotes a 2014 study of American students in four engineering programs (MIT, UMass Amherst, Olin, and Smith), which concludes, "Engineering education fosters a culture of disengagement that defines public welfare concerns as tangential to what it means to practice engineering."

But even if these claims are true, they are about American engineering programs in the 2010s. They are unlikely to explain the radicalization of the extremists in the study, who were educated in Islamic countries in the "mid 70s to late 90s".

At this point there is no reason to think that engineering education contributed to the radicalization of the extremists in the sample. Gambetta and Hertog don't consider the hypothesis, and present no evidence that supports it. To their credit, they "wouldn’t presume to make curricular recommendations to [engineering] educators."

In summary, Gambetta and Hertog provide several credible explanations, supported by evidence, that could explain the observed over-representation. Speculating on other causes, assuming they are true—and then providing prescriptions for American engineering education—is gratuitous. And suggesting in a headline that engineering education causes terrorism is hack journalism.

We have plenty of problems

Traditional engineering programs are broken in a lot of ways. That's why Olin College was founded, and why I wanted to work here. I am passionate about engineering education and excited about the opportunities to make it better. Furthermore, some of the places we have the most room for improvement are exactly the ones highlighted in Berrett's article.

Yes, the engineering curriculum should "emphasize human needs, contexts, and interactions", as the executive director of the American Society for Engineering Education (ASEE) suggests. As he explains, "We want engineers to understand what motivates humans, because that’s how you satisfy human needs. You have to understand people as people."

And yes, we want engineers "who recognize needs, design solutions and engage in creative enterprises for the good of the world". That's why we put it in Olin College's mission statement.

We may not be doing these things very well, yet. We are doing our best to get better.

In the meantime, it is premature to add "preventing terrorism" to our list of goals. We don't know the causes of terrorism, we have no reason to think engineering education is one of them, and even if we did, I'm not sure we would know what to do about it.

Debating changes in engineering education is hard enough. Bringing terrorism into it—without evidence or reason—doesn't help.