I am collaborating with a criminologist who studies recidivism. In the context of crime statistics, a recidivist is a convicted criminal who commits a new crime post conviction. Statistical studies of recidivism are used in parole hearings to assess the risks of releasing a prisoner. This application of statistics raises questions that go to the foundations of probability theory.

Specifically, assessing risk for an individual is an example of a "single-case probability", a well-known problem in the philosophy of probability. For example, we would like to be able to make a statement like, "If released, there is a 55% probability that Mr. Smith will be charged with another crime within 10 years." But how could we estimate a probability like that, and what would it mean?

I suggest we answer these questions in three steps. The first is to choose a "reference class"; the second is to estimate the probability of recidivism in the reference class; the third is to interpret the result as it applies to Mr. Smith.

For example, if the reference class includes all people convicted of the same crime as Mr. Smith, we could find a sample of that population and compute the rate of recidivism in the sample. If 55% of the sample were recidivists, we might claim that Mr. Smith has a 55% probability of reoffending.

Let's look at those steps in more detail:

1) The reference class problem: For any individual offender, there are an unbounded number of reference classes we might choose. For example, if we consider characteristics like age, marital status, and severity of crime, we might form a reference class using any one of those characteristics, any two, or all three. As the number of characteristics increases, the number of possible classes grows exponentially (and I mean that literally, not as a sloppy metaphor for "very fast").
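To see the exponential growth concretely, here is a minimal Python sketch. It assumes, for illustration only, that a candidate reference class is defined by choosing a non-empty subset of the available characteristics; the function name is hypothetical. Under that assumption, k characteristics yield 2^k - 1 candidate classes.

```python
from itertools import combinations

def reference_classes(characteristics):
    """Return every non-empty subset of characteristics, one per candidate reference class."""
    subsets = []
    for size in range(1, len(characteristics) + 1):
        subsets.extend(combinations(characteristics, size))
    return subsets

characteristics = ["age", "marital status", "severity of crime"]
classes = reference_classes(characteristics)
print(len(classes))  # 7, which is 2**3 - 1

# Each additional characteristic roughly doubles the number of candidates.
for k in range(1, 11):
    print(k, 2**k - 1)
```

This counts only which characteristics are used; if each characteristic can also be binned in several ways, the number of candidates is larger still.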

Some reference classes are preferable to others; in general, we would like the people in each class to be as similar as possible to each other, and the classes to be as different as possible from each other. But there is no objective procedure for choosing the "right" reference class.

2)

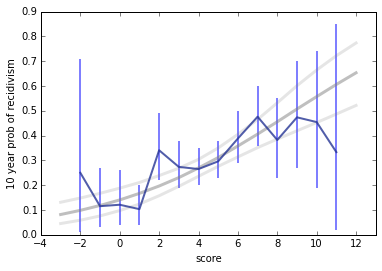

Sampling and estimation Assuming we have chosen a reference class, we would like to estimate the proportion of recidivists in the class. First, we have to define recidivism in a way that's measurable. Ideally we would like to know whether each member of the class commits another crime, but that's not possible. Instead, recidivism is usually defined to mean that the person is either charged or convicted of another crime.

If we could enumerate the members of the class and count recidivists and non-recidivists, we would know the true proportion, but normally we can't do that. Instead we choose a sampling process intended to select a representative sample, meaning that every member of the class has the same probability of appearing in the sample, and then use the observed proportion in the sample to estimate the true proportion in the class.
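As a rough illustration of this step, the following Python sketch draws a simple random sample from a made-up class and uses the sample proportion as the estimate. The population, the sample size, and the seed are all assumptions chosen for the example, not data from any study.

```python
import random

def estimate_proportion(population, sample_size, rng):
    """Draw a simple random sample without replacement and return the observed proportion."""
    sample = rng.sample(population, sample_size)
    return sum(sample) / sample_size

# Hypothetical class: 1 marks a recidivist, 0 a non-recidivist; the true proportion is 0.55.
population = [1] * 5500 + [0] * 4500
rng = random.Random(17)  # fixed seed so the run is repeatable

estimate = estimate_proportion(population, 1000, rng)

# Approximate standard error of the estimate (ignores the finite-population correction).
std_error = (estimate * (1 - estimate) / 1000) ** 0.5
print(f"estimate = {estimate:.3f}, standard error = {std_error:.3f}")
```

In practice the hard part is the sampling process itself; the arithmetic is the easy part.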

3) Interpretation: Suppose we agree on a reference class, C, a sampling process, and an estimation process, and estimate that the true proportion of recidivists in C is 55%. What can we say about Mr. Smith?

As my collaborator has demonstrated, this question is a topic of ongoing debate. Among practitioners in the field, some take the position that "the probability of recidivism for an individual offender will be the same as the observed recidivism rate for the group to which he most closely belongs" (Harris and Hanson 2004). On this view, we would conclude that Mr. Smith has a 55% chance of reoffending.

Others take the position that this claim is nonsense because it could never be confirmed or denied; whether Mr. Smith goes on to commit another crime or not, neither outcome supports or refutes the claim that the probability was 55%. On this view, probability cannot be meaningfully applied to a single event.

To understand this view, consider an analogy suggested by my colleague Rehana Patel: Suppose you estimate that the median height of people in class C is six feet. You could not meaningfully say that the median height of Mr. Smith is six feet. Only the class has a median height; individuals do not. Similarly, only the class has a proportion of recidivists; individuals do not.

And the answer is...

At this point I have framed the problem and tried to state the opposing views clearly. Now I will explain my own view and try to justify it.

I think it is both meaningful and useful to say, in the example, that Mr. Smith has a 55% chance of offending again. Some hold that no possible outcome supports or refutes this claim, but Bayes's theorem tells us otherwise. Suppose we consider two hypotheses:

H55: Mr. Smith has a 55% chance of reoffending.

H45: Mr. Smith has a 45% chance of reoffending.

If Mr. Smith does in fact commit another crime, this datum supports H55 with a Bayes factor of (55)/(45) = 1.22. And if he does not, that datum supports H45 by the same factor. In either case it is weak evidence, but nevertheless it is evidence, which means that H55 is a meaningful claim that can be supported or refuted by data. The same argument applies if there are more than two discrete hypotheses or a continuous set of hypotheses.
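The arithmetic behind those Bayes factors can be checked with a short Python sketch. The function and variable names are illustrative, and the final step assumes equal prior odds for the two hypotheses, which is an assumption added here rather than part of the argument.

```python
def bayes_factor(likelihood_a, likelihood_b):
    """Ratio of the probability of the data under hypothesis A to that under hypothesis B."""
    return likelihood_a / likelihood_b

p_h55, p_h45 = 0.55, 0.45

# Outcome 1: Mr. Smith reoffends. The likelihoods are 0.55 under H55 and 0.45 under H45.
bf_reoffends = bayes_factor(p_h55, p_h45)

# Outcome 2: he does not reoffend. The likelihoods are 1 - 0.45 under H45 and 1 - 0.55 under H55.
bf_desists = bayes_factor(1 - p_h45, 1 - p_h55)

print(round(bf_reoffends, 2))  # 1.22 in favor of H55
print(round(bf_desists, 2))    # 1.22 in favor of H45

# Assuming equal prior odds, the posterior probability of H55 after a new offense:
posterior_h55 = bf_reoffends / (1 + bf_reoffends)
print(round(posterior_h55, 2))  # 0.55
```

Either outcome shifts the odds by the same modest factor, which is what it means for the evidence to be weak but not absent.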

Furthermore, there is a natural operational interpretation of the claim. If we consider some number, n, of individuals from class C, and estimate that each of them has probability, p, of reoffending, we can compute a predictive distribution for k, the number who reoffend. It is just the binomial distribution of k with parameters p and n:

$$P(k \mid n, p) = \binom{n}{k} \, p^k \, (1-p)^{n-k}$$

For example, if n=100 and p=0.55, the most likely value of k is 55, and the probability that k=55 is about 8%. As far as I know, no one has a problem with that conclusion.

But if there is no philosophical problem with the claim, "Of these 100 members of class C, the probability that 55 of them will reoffend is 8%", there should be no special problem when n=1. In that case we would say, "Of these 1 members of class C, the probability that 1 will reoffend is 55%." Granted, that's a funny way to say it, but that's a grammatical problem, not a philosophical one.
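Here is a small Python sketch of that computation, using only the standard library. It evaluates the binomial probability for n=100 and for n=1 with the same function, which is the point of the argument; the function name is my own, and the calculation assumes the individuals reoffend independently.

```python
from math import comb

def binomial_pmf(k, n, p):
    """Probability of exactly k successes in n independent trials with success probability p."""
    return comb(n, k) * p**k * (1 - p) ** (n - k)

n, p = 100, 0.55
pmf = [binomial_pmf(k, n, p) for k in range(n + 1)]
most_likely_k = max(range(n + 1), key=lambda k: pmf[k])

print(most_likely_k)      # 55
print(round(pmf[55], 2))  # 0.08

# The same function with n=1 gives the single-case claim.
print(round(binomial_pmf(1, 1, p), 2))  # 0.55
```

Nothing in the function treats n=1 as a special case.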

Now let me address the challenge of the height analogy. I agree that Mr. Smith does not have a median height; only groups have medians. However, if the median height in C is six feet, I think it is correct to say that Mr. Smith has a 50% chance of being taller than six feet.

That might sound strange; you might reply, "Mr. Smith's height is a deterministic physical quantity. If we measure it repeatedly, the result is either taller than six feet every time, or shorter every time. It is not a random quantity, so you can't talk about its probability."

I think that reply is mistaken, because it leaves out a crucial step:

1) If we choose a random member of C and measure his height, the result is a random quantity, and the probability that it exceeds six feet is 50%.

2) We can consider Mr. Smith to be a randomly-chosen member of C, because part of the notion of a reference class is that we consider the members to be interchangeable.

3) Therefore Mr. Smith's height is a random quantity and we can make probabilistic claims about it.

My use of the word "consider" in the second step is meant to highlight that this step is a modeling decision: if we choose to regard Mr. Smith as a random selection from C, we can treat his characteristics as random variables. The decision not to distinguish among the members of the class is part of what it means to choose a reference class.

Finally, if the proportion of recidivists in C is 55%, the probability that a random member of C will reoffend is 55%. If we consider Mr. Smith to be a random member of C, his probability of reoffending is 55%.
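The height version of this argument can be made concrete with a toy example. The heights below are invented for illustration; the sketch only shows that, once we regard a person as a uniformly random draw from the class, the probability of exceeding the median follows from counting.

```python
from fractions import Fraction
from statistics import median

# Hypothetical heights, in inches, for the members of class C.
heights = [66, 68, 70, 71, 73, 74, 75, 77]

median_height = median(heights)  # 72 inches, that is, six feet
taller = sum(1 for h in heights if h > median_height)

# Probability that a member drawn uniformly at random is taller than the median.
p_taller = Fraction(taller, len(heights))
print(median_height, p_taller)
```

The same counting applies to recidivism: if 55% of the members of C are recidivists, a uniformly random member is a recidivist with probability 55%.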

Is this useful?

I have argued that it is meaningful to claim that Mr. Smith has a 55% probability of recidivism, and addressed some of the challenges to that position.

I also think that claims like this are useful because they guide decision making under uncertainty. For example, if Mr. Smith is being considered for parole, we have to balance the costs and harms of keeping him in prison with the possible costs and harms of releasing him. It is probably not possible to quantify all of the factors that should be taken into consideration in this decision, but it seems clear that the probability of recidivism is an important factor.

Furthermore, this probability is most useful if expressed in absolute, as opposed to relative, terms. If we know that one prisoner has a higher risk than another, that provides almost no guidance about whether either should be released. But if one has a probability of 10% and another 55% (and those are realistic numbers for some crimes) those values could be used as part of a risk-benefit analysis, which would usefully inform individual decisions, and systematically yield better outcomes.
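To illustrate why absolute probabilities matter, here is a deliberately oversimplified Python sketch of a risk-benefit comparison. The decision rule, the function names, and both cost figures are hypothetical placeholders; a real analysis would involve many more factors, some of which cannot be quantified.

```python
def expected_cost_of_release(p_reoffend, harm_if_reoffend):
    """Expected harm from release under a deliberately simple one-factor model."""
    return p_reoffend * harm_if_reoffend

def should_release(p_reoffend, harm_if_reoffend, cost_of_detention):
    """Release when the expected harm of release is below the cost of continued detention."""
    return expected_cost_of_release(p_reoffend, harm_if_reoffend) < cost_of_detention

# Hypothetical costs in arbitrary units; they are placeholders, not estimates.
HARM_IF_REOFFEND = 100
COST_OF_DETENTION = 30

for p in (0.10, 0.55):
    print(p, should_release(p, HARM_IF_REOFFEND, COST_OF_DETENTION))
```

Knowing only that the second prisoner is riskier than the first would not settle either decision; the comparison against the cost of detention requires the probabilities themselves.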

What about the Bayesians and the Frequentists?

When I started this article, I thought the central issue was going to be the difference between the Frequentist and Bayesian interpretations of probability. But I came to the conclusion that this distinction is mostly irrelevant.

Considering the three steps of the process again:

1) Reference class: Choosing a reference class is equally problematic under either interpretation of probability; there is no objective process that identifies the right, or best, reference class. The choice is subjective, but that is not to say arbitrary. There are reasons to prefer one model over another, but because there are multiple relevant criteria, there is no unique best choice.

2) Estimation: The estimation step can be done using frequentist or Bayesian methods, and there are reasons to prefer one or the other. Some people argue that Bayesian methods are more subjective because they depend on a prior distribution, but I think both approaches are equally subjective; the only difference is whether the priors are explicit. Regardless, the method you use to estimate the proportion of recidivists in the reference class has no bearing on the third step.
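As a sketch of how the two approaches compare on the estimation step, the following Python code computes a maximum-likelihood estimate and a Bayesian posterior mean under a Beta prior. The sample counts and the choice of priors are assumptions made for the example, and the function names are hypothetical.

```python
def frequentist_estimate(k, n):
    """Maximum-likelihood estimate of a proportion: the observed fraction."""
    return k / n

def bayesian_estimate(k, n, prior_a=1.0, prior_b=1.0):
    """Posterior mean of a proportion under a Beta(prior_a, prior_b) prior."""
    return (k + prior_a) / (n + prior_a + prior_b)

k, n = 55, 100  # hypothetical sample: 55 recidivists out of 100

print(frequentist_estimate(k, n))               # 0.55
print(round(bayesian_estimate(k, n), 3))        # 0.549 with a uniform prior
print(round(bayesian_estimate(k, n, 5, 5), 3))  # 0.545 with a Beta(5, 5) prior
```

With a sample of this size the estimates differ only slightly, and whichever one is used, the interpretation step proceeds the same way.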

3) Interpretation: In my defense of the claim that the proportion of recidivists in the reference class is the probability of recidivism for the individual, I used Bayes's theorem, which is a universally accepted law of probability, but I did not invoke any particular interpretation of probability.

I argued that we could treat an unknown quantity as a random variable. That idea is entirely unproblematic under Bayesianism, but less obviously compatible with frequentism. Some sources claim that frequentism is specifically incompatible with single-case probabilities.

For example, the Wikipedia article on probability interpretations claims that "Frequentists are unable to take this approach [a propensity interpretation], since relative frequencies do not exist for single tosses of a coin, but only for large ensembles or collectives."

I don't agree that assigning a probability to a single case is a special problem for frequentism. Single-case probabilities seem hard because they make the choice of the reference class more difficult. But choosing a reference class is hard under any interpretation of probability; it is not a special problem for frequentism.

And once you have chosen a reference class, you can estimate parameters of the class and generate predictions for individuals, or groups, without commitment to a particular interpretation of probability.

[For more about alternative interpretations of probability, and how they handle single-case probabilities, see this article in the Stanford Encyclopedia of Philosophy, especially the section on frequency interpretations. As I read it, the author agrees with me that (1) the problem of the single case relates to choosing a reference class, not attributing probabilities to individuals, and (2) in choosing a reference class, there is no special problem with the single case that is not also a problem in other cases. If there is any difference, it is one of degree, not kind.]

Summary

In summary, the assignment of a probability to an individual depends on three subjective choices:

1) The reference class,

2) The prior used for estimation,

3) The modeling decision to regard an individual as a random sample with n=1.

You can think of (3) as an additional choice or as part of the definition of the reference class.

These choices are subjective but not arbitrary; that is, there are justified reasons to prefer one over others, and to accept some as good enough for practical purposes.

Finally, subject to those choices, it is meaningful and useful to make claims like "Mr. Smith has a 55% probability of recidivism".

Q&A

1) Isn't it dehumanizing to treat an individual as if he were an interchangeable, identical member of a reference class? Every human is unique; you can't treat a person like a number!

I conjecture that everyone who applies quantitative methods to human endeavors has heard a complaint like this. If the intended point is, "You should never apply quantitative models to humans," I disagree. Everything we know about the world, including the people in it, is mediated by models, and all models are based on simplifications. You have to decide what to include and what to leave out. If your model includes the factors that matter and omits the ones that don't, it will be useful for practical purposes. If you make bad modeling decisions, it won't.

But if the intent of this question is to say, "Think carefully about your modeling decisions, validate your models as much as practically possible, and consider the consequences of getting it wrong," then I completely agree.

Model selection has consequences. If we fail to include a relevant factor (that is, one that has predictive power), we will treat some individuals unfairly and systematically make decisions that are worse for society. If we include factors that are not predictive, our predictions will be more random and possibly less fair.

And those are not the only criteria. For example, if it turns out that a factor, like race, has predictive power, we might choose to exclude it from the model anyway, possibly decreasing accuracy in order to avoid a particularly unacceptable kind of injustice.

So yes, we should think carefully about model selection, but no, we should not exclude humans from the domain of statistical modeling.

2) Are you saying that everyone in a reference class has the same probability of recidivism? That can't be true; there must be variation within the group.

I'm saying that an individual only has a probability AS a member of a reference class (or, for the philosophers, qua a member of a reference class). You can't choose a reference class, estimate the proportion for the class, and then speculate about different probabilities for different members of the class. If you do, you are just re-opening the reference class question.

To make that concrete, suppose there is a standard model of recidivism that includes factors A, B, and C, and excludes factors D, E, and F. And suppose that the model estimates that Mr. Smith's probability of recidivism is 55%.

You might be tempted to think that 55% is the average probability in the group, and the probability for Mr. Smith might be higher or lower. And you might be tempted to adjust the estimate for Mr. Smith based on factor D, E, or F.

But if you do that, you are effectively replacing the standard model with a new model that includes additional factors. In effect, you are saying that you think the standard model leaves out an important factor, and would be better if it included more factors.

That might be true, but it is a question of model selection, and should be resolved by considering model selection criteria.
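A toy calculation shows what adjusting for an excluded factor amounts to. In this Python sketch the counts are invented, and factor D is assumed to be a simple yes/no attribute; splitting the class by D produces two new reference classes whose rates average back to the original 55%.

```python
# Hypothetical counts for a class defined by factors A, B, and C,
# split by an additional factor D that the standard model leaves out.
subclasses = {
    "D present": {"members": 400, "recidivists": 280},
    "D absent": {"members": 600, "recidivists": 270},
}

def rate(group):
    """Observed proportion of recidivists in a group."""
    return group["recidivists"] / group["members"]

total_members = sum(g["members"] for g in subclasses.values())
total_recidivists = sum(g["recidivists"] for g in subclasses.values())

print(total_recidivists / total_members)  # 0.55 for the original class
for name, group in subclasses.items():
    print(name, rate(group))  # 0.7 and 0.45 for the two new classes
```

Quoting 70% or 45% for Mr. Smith is not a refinement within the standard model; it is a switch to a different model with a different reference class.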

It is not meaningful or useful to talk about differences in probability among members of a reference class. Part of the definition of reference class is the commitment to treat the members as equivalent.

That commitment is a modeling decision, not a claim about the world. In other words, when we choose a model, we are not saying that we think the model captures everything about the world. On the contrary, we are explicitly acknowledging that it does not. But the claim (or sometimes the hope) is that it captures enough about the world to be useful.

3) What happened to the problem of the single case? Is it a special problem for frequentism? Is it a special problem at all?

There seems to be some confusion about whether the problem of the single case relates to choosing a reference class (my step 1) or attributing a probability to an individual (step 3).

I have argued that it does not relate to step 3. Once you have chosen a reference class and estimated its parameters, there is no special problem in applying the result to the case of n=1, under frequentism or any other interpretation I am aware of.

During step 1, single case predictions might be more difficult, because the choice of reference class is less obvious or people might be less likely to agree. But there are exceptions of both kinds: for some single cases, there might be an easy consensus on an obvious good reference class; for some non-single cases, there are many choices and no consensus. In all cases, the choice of reference class is subjective, but guided by the criteria of model choice.

So I think the single case problem is just an instance of the reference class problem, and not a special problem at all.